A design of Intelligent Public Trash Can based on Machine Vision and Auxiliary Sensors

- DOI

- 10.2991/jrnal.k.211108.009

- Keywords

- Garbage classification; machine vision; deep learning; auxiliary sensors

- Abstract

An automatic garbage classification system based on machine vision recognizes garbage at the front end of the classification process far more accurately than a traditional smart garbage can. However, its recognition accuracy for irregularly shaped garbage is low. To solve this problem, four types of auxiliary sensors are added to the trash can. The sensor readings are combined with the machine vision result to reach a comprehensive judgment, which greatly improves the recognition accuracy for irregular garbage. The results show broad application prospects in waste classification and environmental protection.

- Copyright

- © 2021 The Authors. Published by Atlantis Press International B.V.

- Open Access

- This is an open access article distributed under the CC BY-NC 4.0 license (http://creativecommons.org/licenses/by-nc/4.0/).

1. INTRODUCTION

With the development of society and rising living standards, garbage classification is receiving more and more attention, and many regions have implemented garbage classification policies. However, because garbage comes in many types and people’s awareness of sorting it themselves is poor, classification rarely achieves the expected effect [1]. As a result, intelligent sorting trash bins based on various technologies have emerged.

The core of an intelligent sorting trash can is automatically identifying the type of trash. As machine vision technology has matured and become widely applied, intelligent sorting trash cans based on it have appeared, and they can identify and automatically sort some garbage. However, because of the technical limitations of machine vision, they cannot recognize all garbage. For example, it is difficult to recognize the same kind of garbage when it appears in different shapes.

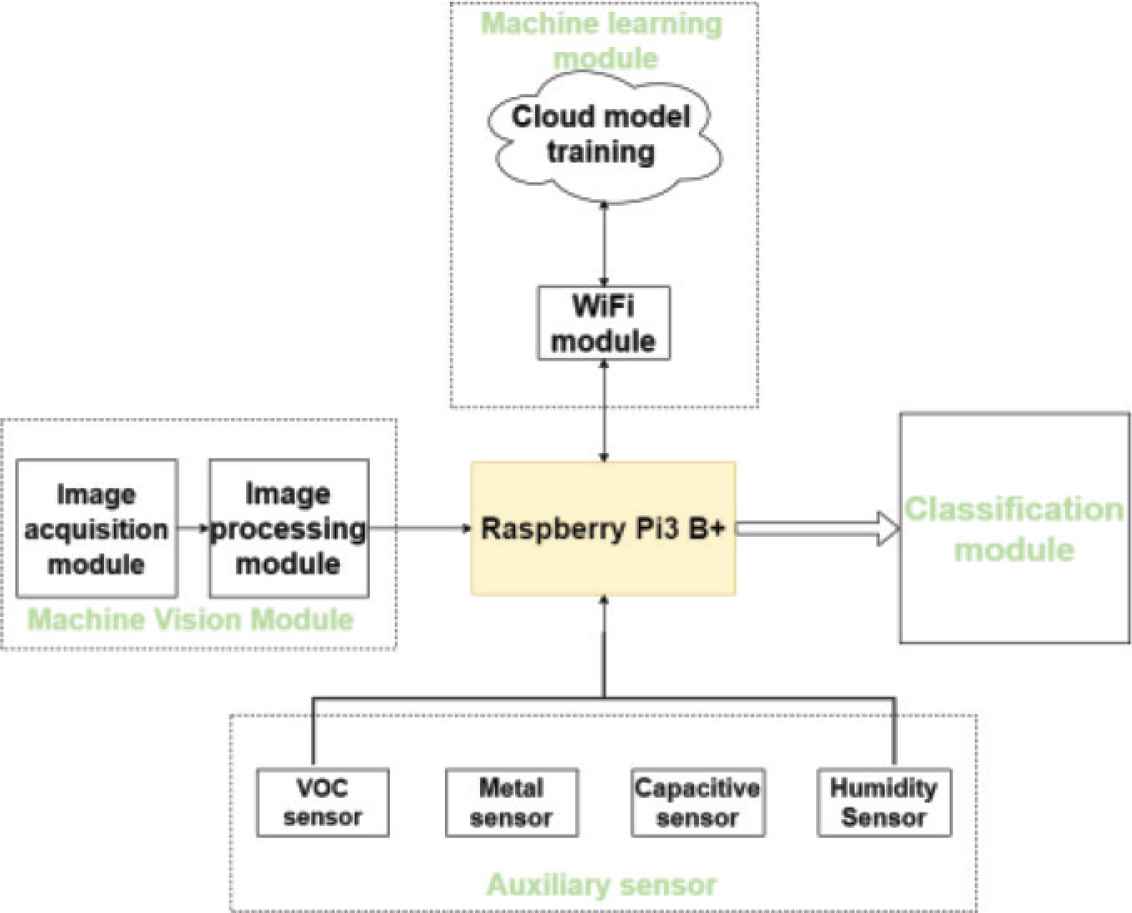

In summary, a smart trash can should be based on machine vision but also needs a supplement for cases where machine vision fails. Therefore, a smart public trash can based on machine vision and auxiliary sensors is proposed. Besides recognizing and automatically sorting garbage through machine vision, it uses sensors to assist identification, which addresses problems such as classifying the same kind of garbage in different shapes. Self-learning is also added, so that the number of identifiable types keeps increasing.

2. OVERALL DESIGN SCHEME OF THE SYSTEM

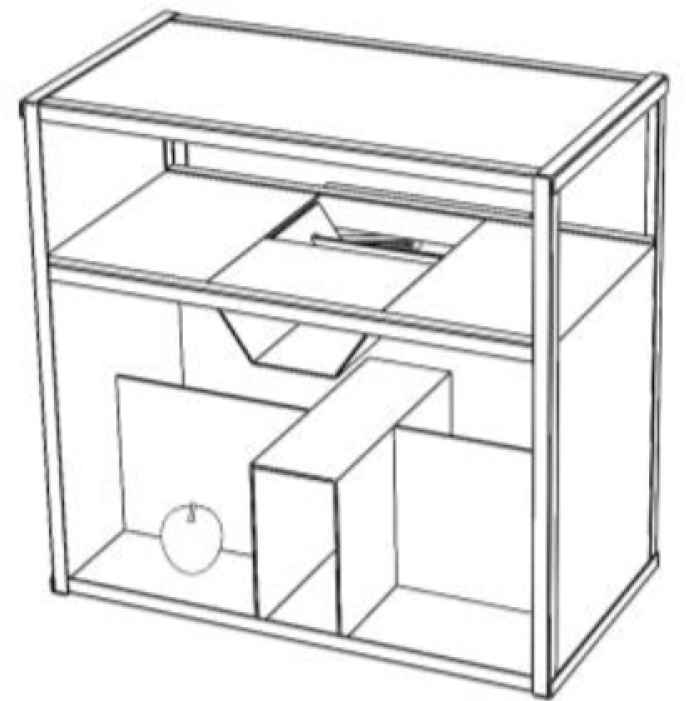

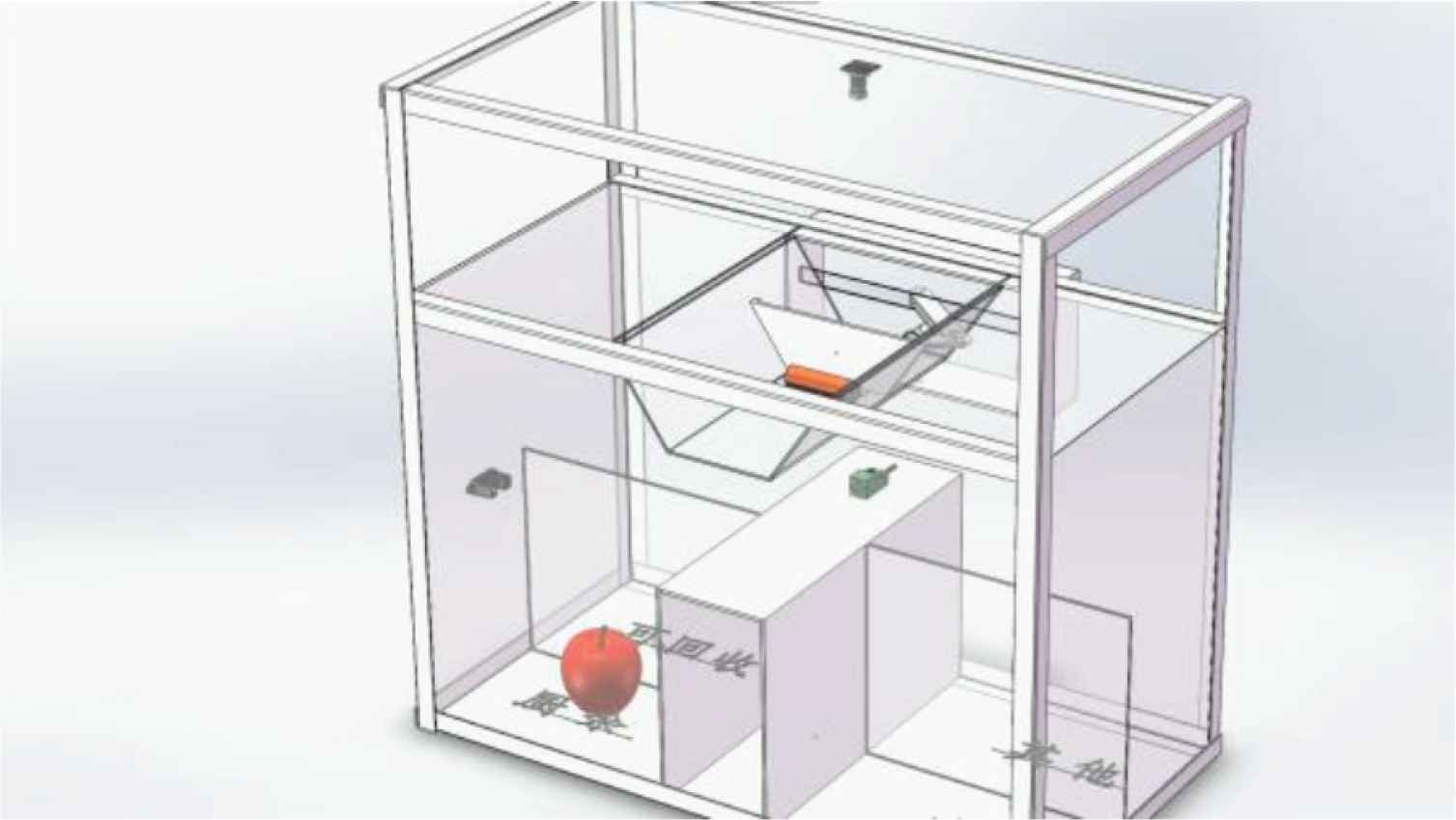

Based on actual application scenarios and market requirements, this paper combines machine vision, sensor detection technology, and wireless communication technology to design a smart public trash can. It provides automatic garbage sorting, machine learning (continuously increasing the number of identifiable types), classification of the same kind of garbage in different states, and real-time monitoring of the garbage fill level. The structure diagram of the trash can is shown in Figure 1.

Figure 1. Trash can structure diagram.

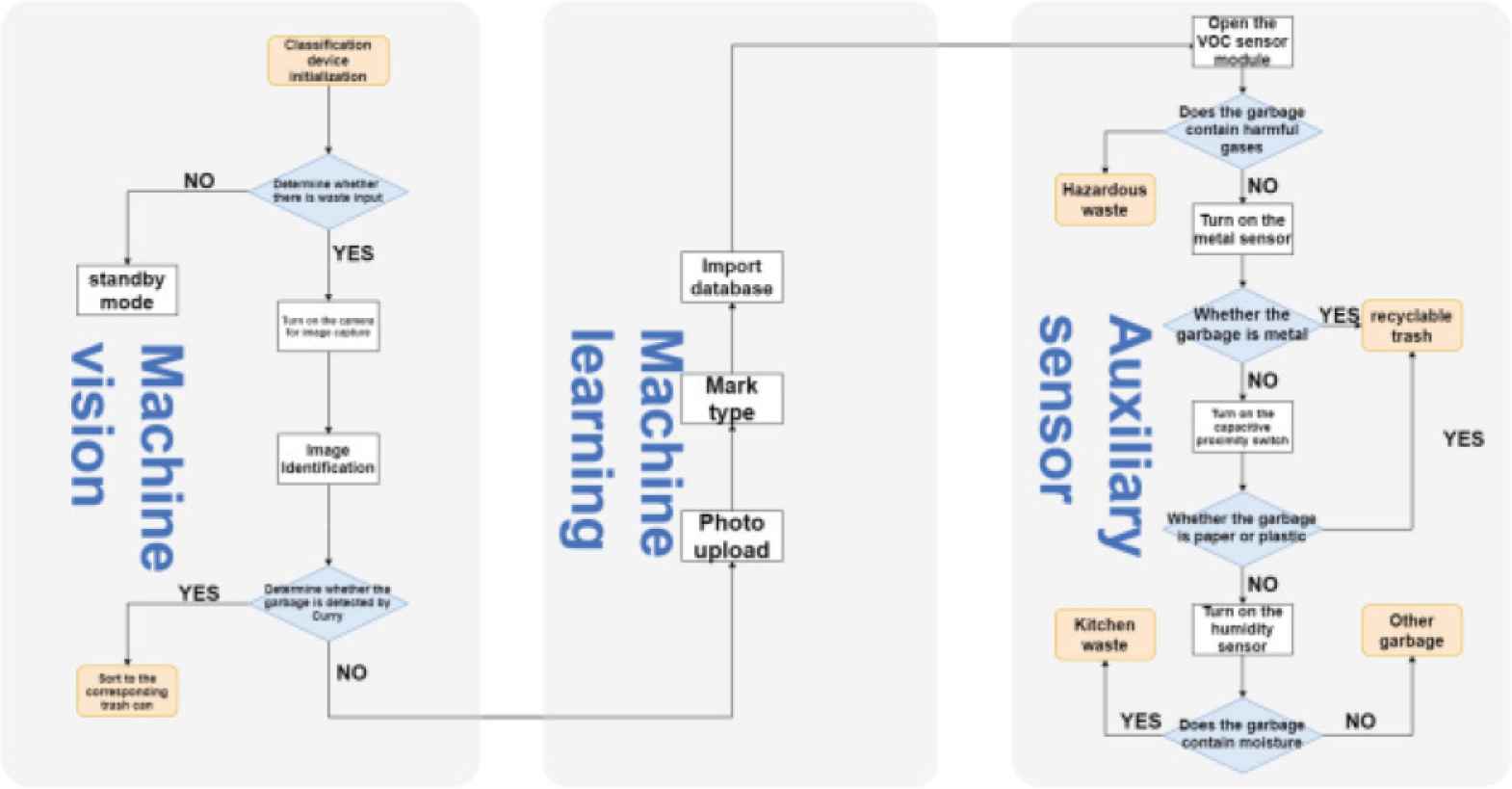

When the smart trash can detects that garbage has been dropped in, it uses a camera to capture and identify the item [2]. It then compares the result against the data in the database to determine the type of garbage, and uses its mechanical structure to sort the item into the corresponding bin. The flow chart of the intelligent public trash can based on machine vision is shown in Figure 2.

Figure 2. Overall flow chart of the intelligent public trash bin based on machine vision.

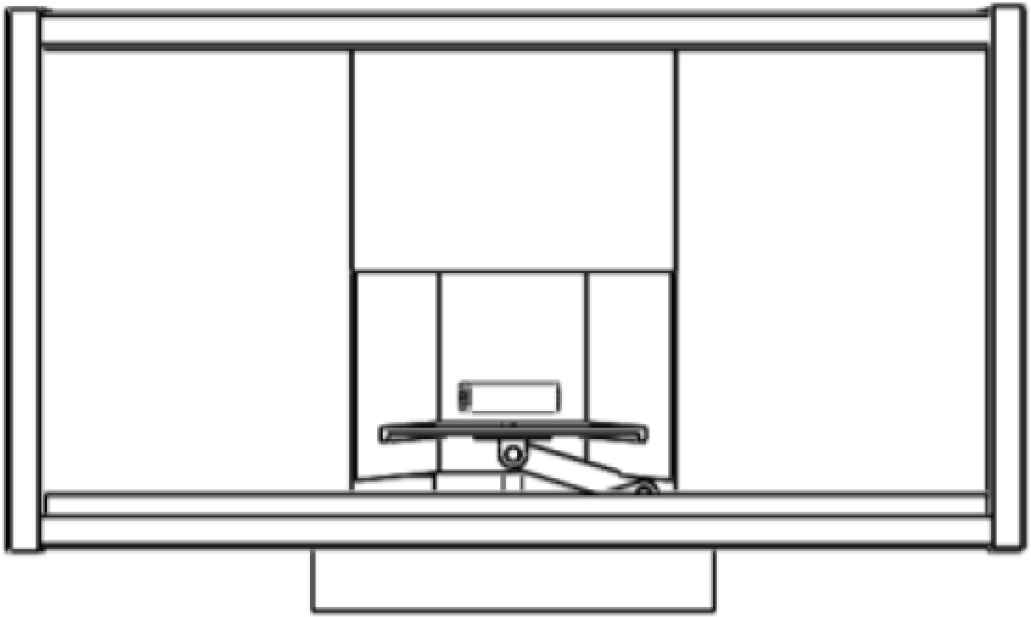

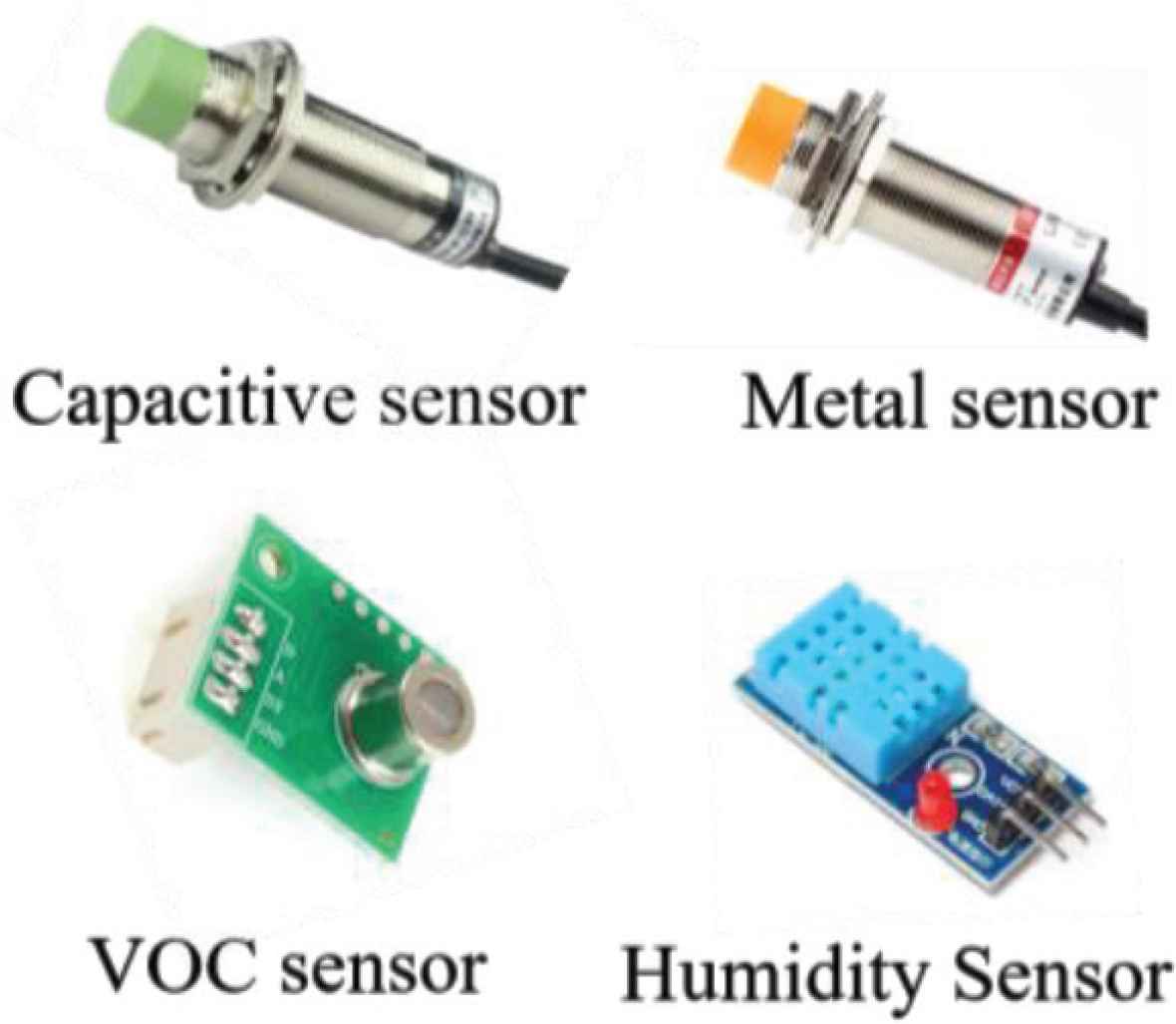

For garbage that machine vision cannot recognize, the trash can relies on the combined readings of the metal sensor, humidity sensor, Volatile Organic Compounds (VOC) odor sensor, and capacitive sensor to sort the item into the corresponding bin. The main purpose is to keep unrecognized garbage from occupying the identification position. The garbage placement position is shown in Figure 3.

Figure 3. Garbage placement.
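The fallback from machine vision to the auxiliary sensors can be sketched as a small decision function. This is only an illustration: the paper does not publish its decision rules, so the confidence threshold, the sensor thresholds, the rule order, and the bin names (`metal`, `wet_or_organic`, `non_metal_recyclable`, `other`) are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed value: the paper does not state a confidence threshold.
VISION_CONFIDENCE_THRESHOLD = 0.8


@dataclass
class SensorReadings:
    metal_detected: bool       # metal sensor
    humidity_percent: float    # humidity sensor
    voc_ppm: float             # VOC odor sensor
    capacitance_changed: bool  # capacitive sensor


def choose_bin(vision_label: Optional[str],
               vision_confidence: float,
               sensors: SensorReadings) -> str:
    """Return the bin for one item, preferring the machine vision result."""
    # Trust machine vision when it is confident enough.
    if vision_label is not None and vision_confidence >= VISION_CONFIDENCE_THRESHOLD:
        return vision_label
    # Otherwise fall back to hypothetical sensor rules, checked in order.
    if sensors.metal_detected:
        return "metal"
    if sensors.humidity_percent > 70.0 or sensors.voc_ppm > 1.0:
        return "wet_or_organic"
    if sensors.capacitance_changed:
        return "non_metal_recyclable"
    # Nothing matched: send the item to a default bin so that it does not
    # keep occupying the identification position.
    return "other"
```

For example, a crushed can that machine vision scores at 0.4 would still be routed to the `metal` bin if the metal sensor fires. In a real system the thresholds would have to be calibrated against the actual sensors.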

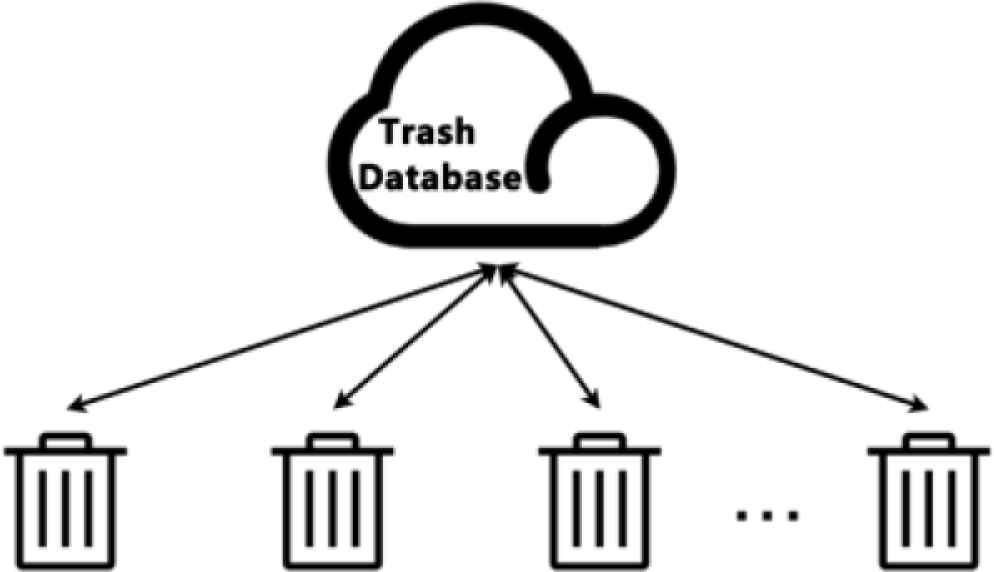

At the same time, the unrecognized garbage is photographed and uploaded to the server, where the model is retrained to determine its type. The next time the same garbage appears, it is classified directly through machine vision. Each trash can also shares its data with the others, which greatly speeds up learning and shortens the learning cycle. The data sharing topology is shown in Figure 4.

Figure 4. Data sharing topology diagram.

3. HARDWARE SYSTEM DESIGN

The box body of the intelligent sorting trash can has a top plate, and a camera is installed on the lower surface of the top plate. The box body contains several sorting compartments for collecting different types of garbage, arranged opposite each other in two rows. In the middle of the sorting compartments there is a garbage flipping plate that can be driven to turn left or right. The flipping plate is a trough-shaped structure that receives the garbage, and it is fitted with a push plate that pushes the garbage to the front end. The structure diagram of the smart trash can is shown in Figure 5.

Figure 5. Smart trash can structure diagram.

3.1. Controller

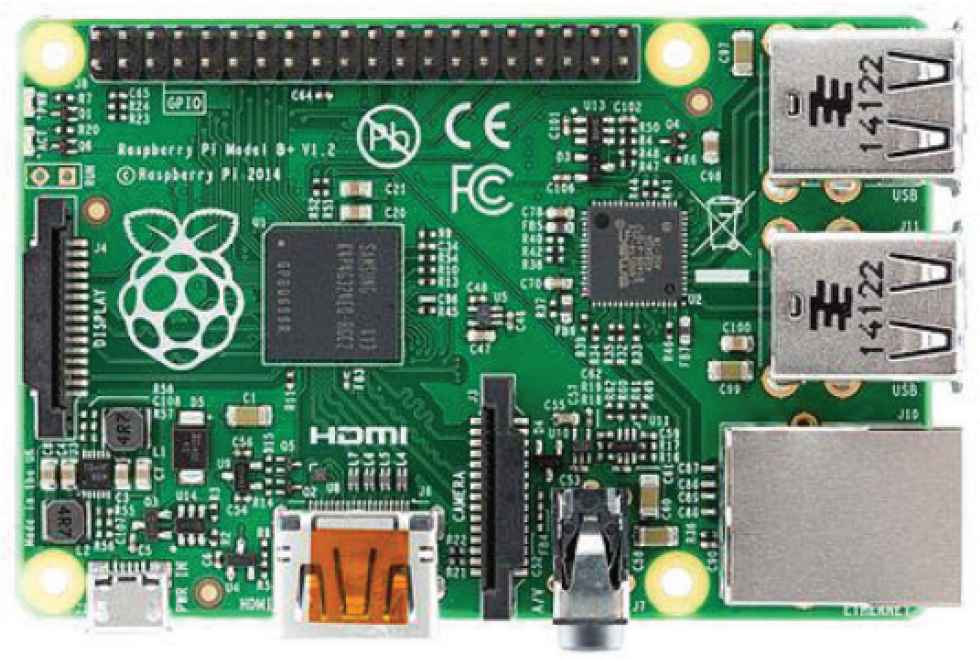

Raspberry Pi 3B+ is a small but powerful Arm-based single-board computer, roughly equivalent to a minimal personal computer. With an external camera attached it can perform image processing, and it also controls the mechanical structure. The Raspberry Pi 3B+ and the hardware diagram are shown in Figures 6 and 7.

Figure 6. Raspberry Pi 3B+.

Figure 7. Hardware diagram.

3.2. Auxiliary Sensor

Because machine vision has a low recognition rate for certain types of garbage, the intelligent public trash can designed in this paper adds sensor-assisted recognition on top of machine vision. The sensors used are a VOC sensor, a metal sensor, a capacitance sensor, and a humidity sensor. The auxiliary sensors are shown in Figure 8.

Figure 8. Auxiliary sensor.

4. ALGORITHM DESIGN

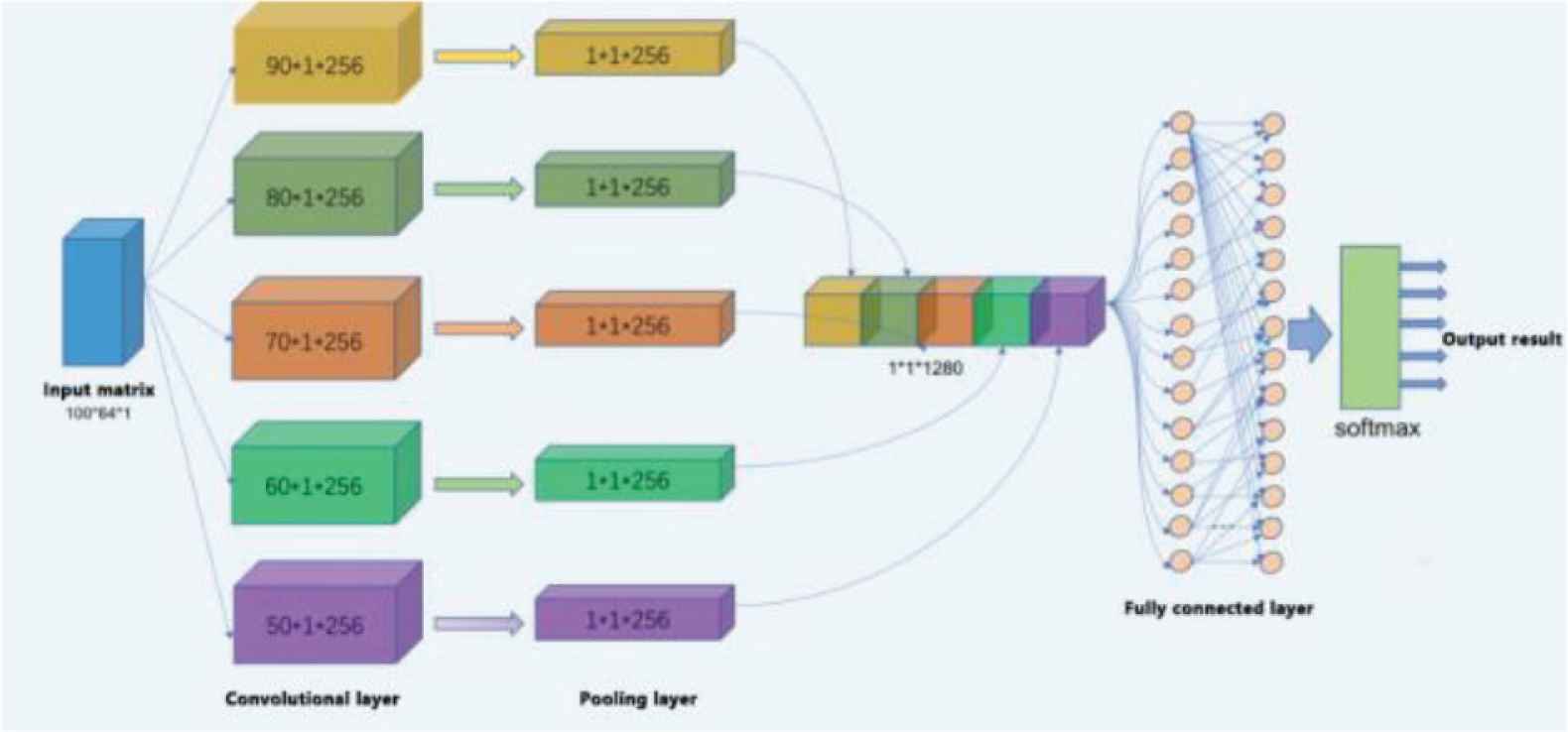

A convolutional neural network is a feed-forward neural network. As shown in Figure 9, it includes an input layer, convolution layers, pooling layers, fully connected layers, and a Softmax output layer [3]. The neurons in each layer are connected only to those of the previous layer. The system uses the Inception v3 network as the feature extractor: its original weights are kept, and a new network is built on top of it and retrained.

Figure 9. Inception v3.
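To make the role of the Softmax output layer concrete, the sketch below shows the forward pass of a small classification head placed on top of a fixed feature vector. It is written in plain Python for readability and is not the authors' implementation. The class names, the `classify` helper, and the tiny weight matrix are illustrative assumptions. In a setup like the one described, the features would come from the frozen Inception v3 network, whose pooled output has 2048 dimensions.

```python
import math
from typing import List, Sequence, Tuple

# Assumed class order, taken from the six garbage types used in the experiments.
CLASSES = ["can", "plastic_bottle", "milk_carton",
           "paper_cup", "paper_ball", "battery"]


def softmax(logits: Sequence[float]) -> List[float]:
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(features: Sequence[float],
             weights: Sequence[Sequence[float]],
             biases: Sequence[float]) -> Tuple[str, float]:
    """Apply one fully connected layer plus softmax to fixed features.

    weights holds one row per class; only this head would be retrained
    while the feature extractor keeps its original weights.
    """
    logits = [sum(w * x for w, x in zip(row, features)) + b
              for row, b in zip(weights, biases)]
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return CLASSES[best], probs[best]
```

The returned probability can also serve as a confidence score, for instance to decide when an item should be treated as unrecognized.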

5. EXPERIMENTS AND RESULTS

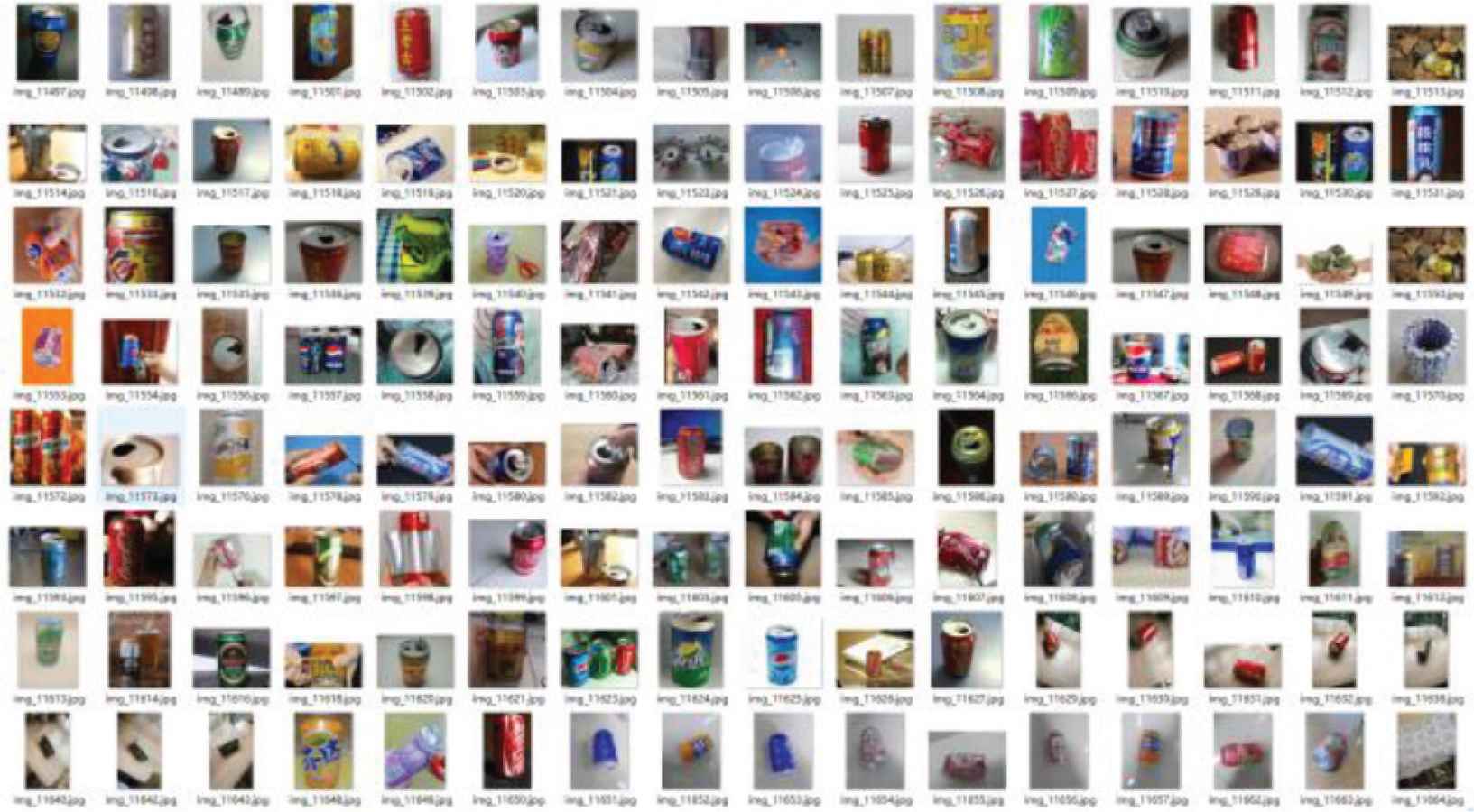

Six types of garbage common in daily life were selected as the identification objects for the experiment: cans, plastic bottles, milk cartons, paper cups, paper balls, and batteries. The project team obtained the data set for training and testing the convolutional neural network in two ways. First, the Raspberry Pi wide-angle camera was used to photograph garbage items, giving 1500 pictures taken against different backgrounds and from different angles. Second, 1500 images that met the requirements were collected from the Internet. The original data set therefore contains 3000 images in total, 500 for each type of garbage. The data set was then labeled manually, and images of each type were stored in a separate label folder. Finally, the data set (Figure 10) was divided into a training set and a test set at a ratio of 4:1 for neural network training.

Figure 10. Data set.
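The 4:1 split can be illustrated with a short sketch. It assumes the split is made separately within each label folder, so that every type keeps the same ratio; the paper does not say whether this was done. The function name, the fixed random seed, and the file paths in the usage note are hypothetical.

```python
import random
from typing import Dict, List, Tuple

Sample = Tuple[str, str]  # (image path, label)


def split_dataset(images_by_label: Dict[str, List[str]],
                  train_parts: int = 4,
                  test_parts: int = 1,
                  seed: int = 0) -> Tuple[List[Sample], List[Sample]]:
    """Split each label's images into training and test sets at train_parts:test_parts."""
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train: List[Sample] = []
    test: List[Sample] = []
    for label, paths in sorted(images_by_label.items()):
        shuffled = list(paths)
        rng.shuffle(shuffled)
        cut = len(shuffled) * train_parts // (train_parts + test_parts)
        train += [(path, label) for path in shuffled[:cut]]
        test += [(path, label) for path in shuffled[cut:]]
    return train, test
```

With 500 images per type and six types, this yields 400 training and 100 test images per type, or 2400 and 600 images overall.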

5.1. Neural Network Training

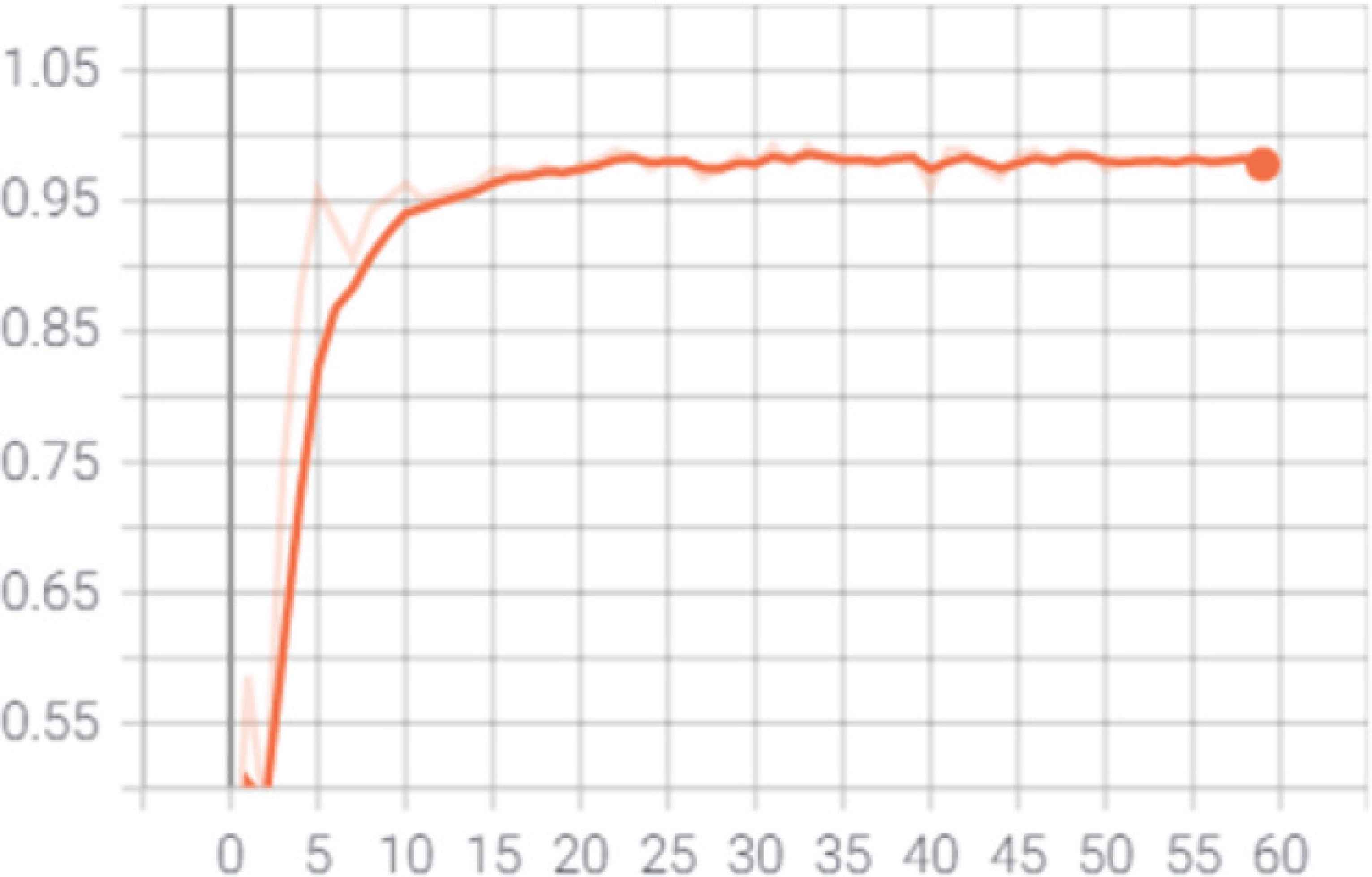

The number of training iterations is set according to the size of the training set. Every 100 iterations form one stage, and after each stage the average recognition accuracy on the test set described above is output. Figure 11 shows how the accuracy changes with the number of iterations. As training proceeds, the recognition accuracy gradually increases and eventually converges to 1. Increasing the number of iterations further has no significant effect on the accuracy, which indicates that the neural network is fully trained.

Figure 11. Training result.
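The staged schedule can be sketched as a loop that trains for 100 iterations, evaluates once, and stops when the accuracy no longer improves. The stopping rule (`patience`, `min_gain`), the stage limit, and the `train_step` and `evaluate` callables are assumptions made for illustration. The paper only states that further training brings no significant gain.

```python
from typing import Callable, List


def train_in_stages(train_step: Callable[[], None],
                    evaluate: Callable[[], float],
                    steps_per_stage: int = 100,
                    max_stages: int = 50,
                    patience: int = 3,
                    min_gain: float = 0.001) -> List[float]:
    """Train in stages and return the test accuracy measured after each stage."""
    history: List[float] = []
    best = 0.0
    stale_stages = 0
    for _ in range(max_stages):
        for _ in range(steps_per_stage):
            train_step()           # one training iteration
        accuracy = evaluate()      # average accuracy on the test set
        history.append(accuracy)
        if accuracy > best + min_gain:
            best = accuracy
            stale_stages = 0
        else:
            stale_stages += 1
            if stale_stages >= patience:
                break              # accuracy has stopped improving
    return history
```

Plotting the returned list against the stage index gives an accuracy curve of the kind described above.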

5.2. Network Model Accuracy Test

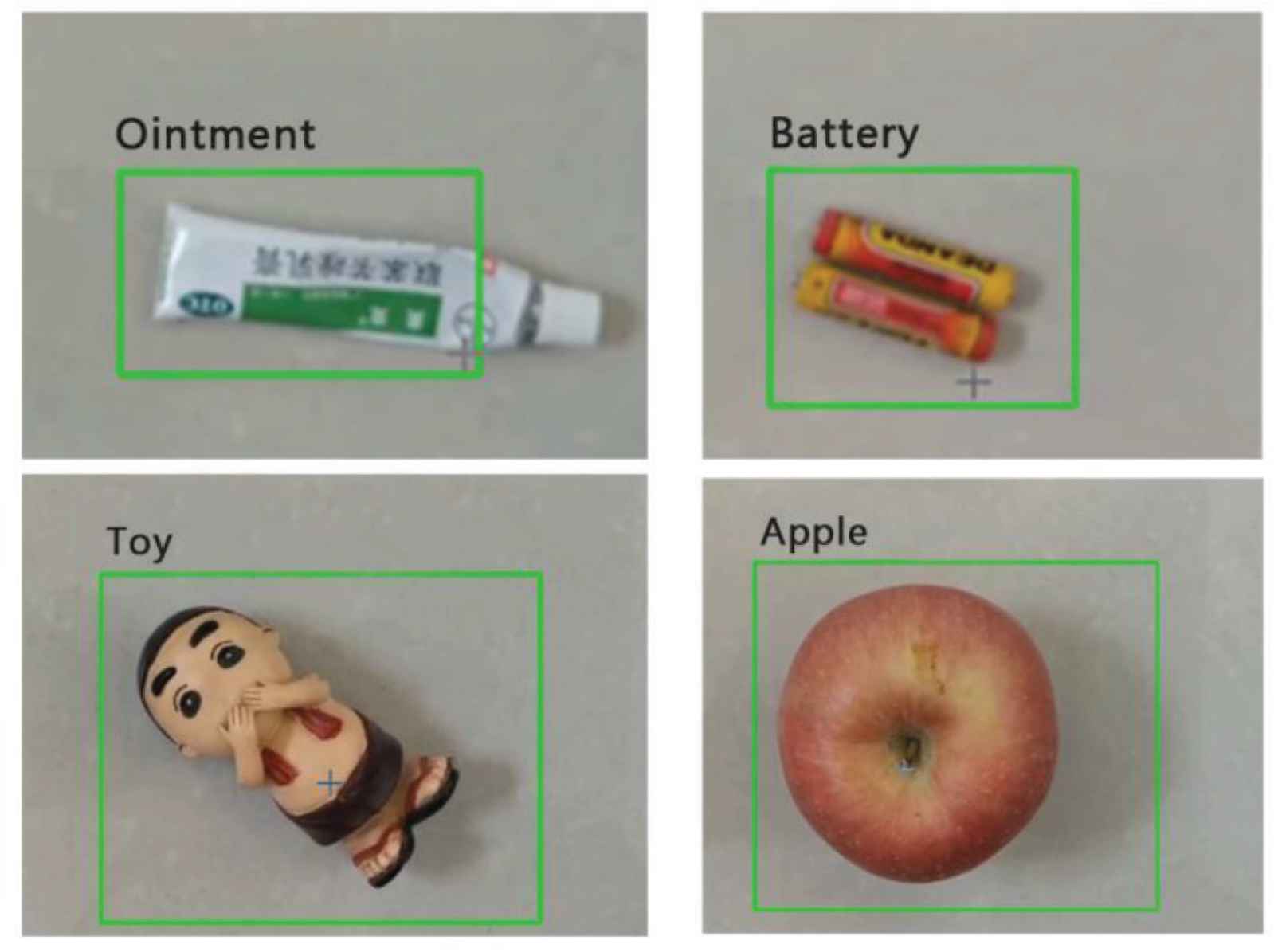

The verification experiment covered the six common types of garbage. For each type, 10 representative items with obvious differences in appearance were selected, and 100 throws were made at different angles, with different sizes and different degrees of damage. The classification results are recorded in Table 1, and the recognition results are shown in Figure 12.

| Type of garbage | Correct recognitions (out of 100 throws) | Average recognition rate (%) |
|---|---|---|
| Can | 87 | 87 |
| Plastic bottle | 92 | 92 |
| Milk carton | 90 | 90 |
| Paper cup | 94 | 94 |
| Paper ball | 93 | 93 |
| Battery | 95 | 95 |

Table 1. Recognition result.

Figure 12. Recognition result.

Table 1 shows that in actual use the system recognizes the six common types of garbage with an accuracy of at least 87%. The appearance model of the trash can used in this experiment also shows that the trash can is small and meets the expected design requirements. More types of garbage can be identified by expanding the training set.
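The figures in Table 1 can be checked with a few lines of arithmetic. The sketch below uses the counts from the table and assumes 100 throws per type, as the experiment describes. The function names are illustrative.

```python
from typing import Dict

# Correct recognitions per type, copied from Table 1.
CORRECT_OUT_OF_100: Dict[str, int] = {
    "Can": 87,
    "Plastic bottle": 92,
    "Milk carton": 90,
    "Paper cup": 94,
    "Paper ball": 93,
    "Battery": 95,
}
THROWS_PER_TYPE = 100


def recognition_rates(correct: Dict[str, int], throws: int) -> Dict[str, float]:
    """Per-type recognition rate in percent."""
    return {name: 100.0 * count / throws for name, count in correct.items()}


def overall_rate(correct: Dict[str, int], throws: int) -> float:
    """Recognition rate in percent over all throws of all types."""
    return 100.0 * sum(correct.values()) / (throws * len(correct))
```

With these counts, 551 of the 600 throws were recognized correctly, an overall rate of about 91.8%. Cans have the lowest rate, 87%.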

6. CONCLUSION

To address the problem of garbage classification, this paper proposes a classification method that combines machine vision with auxiliary sensors, and designs an automatic garbage classification hardware system whose recognition accuracy is at least 0.87 for the six tested garbage types. The system automatically classifies and recycles common garbage in daily life.

During this research, we found several problems worth further study. First, the data set can be expanded to identify more kinds of garbage. Second, other garbage collection structures can be studied and designed to meet the sorting needs of more target categories.

CONFLICTS OF INTEREST

The authors declare they have no conflicts of interest.

ACKNOWLEDGMENTS

The research is partly supported by the Project of Tianjin Enterprise Science and Technology Commissioner to Tianjin Tianke Intelligent and Manufacture Technology Co., Ltd (19JCTPJC53700). It is also supported by the Industry-University Cooperation and Education Project (201802286009) from Ministry of Education, China.

AUTHORS INTRODUCTION

Mr. Longyu Gao

He majored in Automation in College of Electronic Information and Automation, Tianjin University of Science and Technology. He learned SCM and data crawler and other related knowledge, and achieved certain results in relevant professional competitions.


Dr. Fengzhi Dai

He received an M.E. from the Beijing Institute of Technology, China in 1998 and a Doctor of Engineering (PhD) from Oita University, Japan in 2004. His main research interests are artificial intelligence, pattern recognition and robotics. He worked at the National Institute of Technology, Matsue College, Japan from 2003 to 2009. Since October 2009, he has been on the staff of Tianjin University of Science and Technology, China, where he is currently an Associate Professor of the College of Electronic Information and Automation.


Miss. Zhiqing Xiao

She majored in Automation in College of Electronic Information and Automation, Tianjin University of Science and Technology. She has learned MCU programming, machine vision and other related knowledge, and has made certain achievements in related professional competitions.


Mr. Jiangyu Wu

He majored in Electrical Engineering and Automation in College of Electronic Information and Automation, Tianjin University of Science and Technology. He learned computer vision and machine learning and other related knowledge, and achieved certain results in relevant professional competitions.


Mr. Zilong Liu

He majored in Machinery and Electronics Engineering in College of Mechanical Engineering, Tianjin University of Science and Technology. He learned Mechanical Control Engineering and other related knowledge, and achieved certain results in relevant professional competitions.


REFERENCES

Cite this article

TY - JOUR
AU - Longyu Gao
AU - Fengzhi Dai
AU - Zhiqing Xiao
AU - Jiangyu Wu
AU - Zilong Liu
PY - 2021
DA - 2021/12/27
TI - A design of Intelligent Public Trash Can based on Machine Vision and Auxiliary Sensors
JO - Journal of Robotics, Networking and Artificial Life
SP - 273
EP - 277
VL - 8
IS - 4
SN - 2352-6386
UR - https://doi.org/10.2991/jrnal.k.211108.009
DO - 10.2991/jrnal.k.211108.009
ID - Gao2021
ER -